Splitwise is proud to present Cacheable, a gem designed to facilitate and normalize method caching.

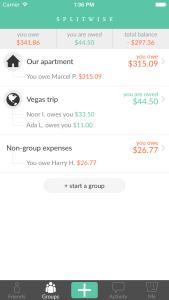

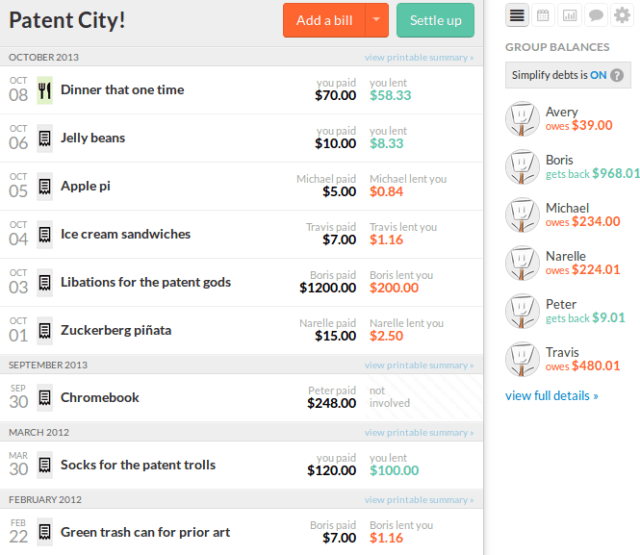

Splitwise is designed to take the stress out of sharing expenses. If people think we’re doing a good job, they’re going to put more expenses in Splitwise. This is great! But eventually, a Splitwise group has so many expenses that it takes a non-trivial amount of time to calculate the total balance. A server that makes people wait while it crunches numbers only adds stress, so we needed to speed this process up. Since balances only change when there is activity in the group, we decided to resolve this classic space/time trade-off by caching the most recent balance calculation.

While our performance improved, our code developed a smell. Caching logic had to be hand-written into every method that could benefit from it. Not only was our code no longer DRY (Don’t Repeat Yourself), we were also noticing occasional inconsistencies at runtime that forced us to recalculate the cache. This was not overly surprising, as we all know that cache invalidation is one of the two hard things in computer science. But because correct balances are the core of Splitwise, we needed them to be correct all of the time.

We continued to tweak the caching logic, built workarounds, and even added conditional caching clauses to avoid race conditions. But we still had problems. As if this wasn’t frustrating enough, the extra caching code made our tests more and more complicated. We had intended to pay for time (a faster, better user experience) with space (memory for the cache), but we also received the hidden fee of increased maintenance costs. There simply had to be a better way.

We decided to step back and reexamine our first principles, specifically the single responsibility principle (SRP). While it’s primarily for modules and classes, it can be applied to methods too. Our method for computing the balance should not be responsible for caching at all — not checking, validating, or populating, conditionally or not. We began to think, “What if we could extract the caching logic from the method? What if the method was only responsible for the balance, and the caching could be tested and built separately?”

As a dynamic, interpreted language, Ruby makes method composition easy to accomplish with metaprogramming, or code that writes code. A good deal of the incredible functionality found in Rails and other gems comes from Ruby’s embrace of metaprogramming. If you’ve used Ruby for any amount of time, you’ve likely encountered metaprogramming whether you knew it or not, because it is typically small, unobtrusive, and well encapsulated. In our case, we extracted the caching logic from our methods and moved it to a dedicated module. This module can then be included in other classes, where a single directive kicks off the metaprogramming that wraps the indicated methods with caching behavior.
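To make the idea concrete, here is a minimal sketch of how such a module could wrap methods. It is not the gem’s actual source: the names `TinyCacheable`, `STORE`, and `ExpenseGroup` are hypothetical, a plain Hash stands in for a real cache store, and the sketch only handles methods that take no arguments.

```ruby
# Hypothetical sketch of method wrapping; not the Cacheable gem's real code.
module TinyCacheable
  # A plain Hash stands in for a real cache store (assumption).
  STORE = {}

  def self.included(base)
    base.extend(ClassMethods)
  end

  module ClassMethods
    # The single directive: wraps each named zero-argument method with caching.
    def cacheable(*method_names)
      wrapper = Module.new do
        method_names.each do |name|
          define_method(name) do
            # Simplistic per-object key, good enough for this sketch.
            key = [self.class.name, object_id, name].join('/')
            if STORE.key?(key)
              STORE[key]
            else
              # super() reaches the original method defined on the class.
              STORE[key] = super()
            end
          end
        end
      end
      # Prepending puts the wrapper ahead of the class in method lookup.
      prepend wrapper
    end
  end
end

class ExpenseGroup
  include TinyCacheable

  attr_reader :calculations

  def initialize(expenses)
    @expenses = expenses
    @calculations = 0
  end

  cacheable :balance

  # Knows nothing about caching; it only computes the balance.
  def balance
    @calculations += 1
    @expenses.sum
  end
end

group = ExpenseGroup.new([10, 20, 30])
group.balance      # => 60, computed and stored
group.balance      # => 60, served from the store
group.calculations # => 1, the method body ran only once
```

Using `prepend` here means the original method stays untouched and can be tested on its own, while the wrapper can be tested separately against any method.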

Previously, each method not only calculated its data and cached it, but also created its own cache key and was responsible for conditionally clearing the cache. How should a unified caching system handle something as hard and thorny as cache invalidation? As with many things, Rails has an opinion. Rails uses key-based cache expiration, which ties the generated cache key to the object’s state. ActiveRecord has a method called `cache_key` for this, which creates the key from the object’s class, id, and `updated_at` timestamp. Put simply, when an object is created or updated in the database, its cache key changes. The fresh key has no value in the cache, and the old cached value is associated with a key that is no longer generated. When combined with the many cache systems that remove the least recently used (LRU) values over time or as they run out of space, you get an effective way to sidestep the cache invalidation problem.
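A small plain-Ruby illustration of key-based expiration follows. `GroupRecord` is a hypothetical stand-in for an ActiveRecord model, the Hash stands in for a cache store, and the key string format is an assumption that only mirrors the class/id/timestamp shape described above, not Rails’ exact output.

```ruby
# Hypothetical stand-in for a model; not ActiveRecord itself.
GroupRecord = Struct.new(:id, :updated_at) do
  # Assumed format: collection name, id, and a timestamp derived from updated_at.
  def cache_key
    "group_records/#{id}-#{updated_at.strftime('%Y%m%d%H%M%S')}"
  end
end

cache = {} # stands in for a real cache store

group = GroupRecord.new(42, Time.utc(2018, 1, 1, 12, 0, 0))
old_key = group.cache_key # => "group_records/42-20180101120000"
cache["#{old_key}/balance"] = 150

# Saving a change bumps updated_at, so the next lookup builds a different key.
group.updated_at = Time.utc(2018, 1, 2, 9, 30, 0)
new_key = group.cache_key # => "group_records/42-20180102093000"

cache.key?("#{new_key}/balance") # => false, a miss, so the balance is recomputed
cache.key?("#{old_key}/balance") # => true, the stale entry lingers until evicted
```

Nothing ever deletes the old entry explicitly. It simply stops being asked for, and an LRU-style store eventually discards it.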

This was a good starting point, as we had decided our caching library should have a general opinion on the format of the key for ease of use, and that the format should be easy to modify. By default, the key under which a cacheable method stores its value is made up of the result of `cache_key` (or the class’s name if `cache_key` is undefined) and the name of the method being called. This provides an easy point of modification: `cache_key` can be overridden to provide any details necessary about the instance being cached. Finally, a method’s result depends on its arguments. However, its cache key may not. Since there wasn’t a good default behavior here, we added the ability to specify a `key_format` proc when invoking Cacheable so that the key can be fine-tuned using the instance, method name, and method arguments.
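The sketch below shows how a default key and a `key_format` override might fit together. It is self-contained and illustrative only: `KeyedCacheable`, `STORE`, and `Ledger` are hypothetical names, and the three-argument proc signature is an assumption modeled on the description above, so check the gem’s README for the real interface.

```ruby
# Hypothetical sketch of key handling; not the Cacheable gem's real code.
module KeyedCacheable
  STORE = {} # a plain Hash stands in for a real cache store (assumption)

  def self.included(base)
    base.extend(ClassMethods)
  end

  module ClassMethods
    def cacheable(*method_names, key_format: nil)
      # Default key: cache_key (or the class name) plus the method name.
      key_format ||= lambda do |target, method_name, _method_args|
        base = target.respond_to?(:cache_key) ? target.cache_key : target.class.name
        [base, method_name].join('/')
      end

      wrapper = Module.new do
        method_names.each do |name|
          define_method(name) do |*args|
            key = key_format.call(self, name, args)
            if STORE.key?(key)
              STORE[key]
            else
              STORE[key] = super(*args)
            end
          end
        end
      end
      prepend wrapper
    end
  end
end

class Ledger
  include KeyedCacheable

  def initialize(id, shares)
    @id = id
    @shares = shares
  end

  # Overriding cache_key controls the instance-specific part of the key.
  def cache_key
    "ledger/#{@id}"
  end

  # The result depends on the member argument, so this key includes it.
  cacheable :balance_for, key_format: lambda { |target, method_name, method_args|
    [target.cache_key, method_name, method_args.first].join('/')
  }

  def balance_for(member)
    @shares.fetch(member)
  end
end

ledger = Ledger.new(7, { 'alice' => 25, 'bob' => -25 })
ledger.balance_for('alice') # => 25
ledger.balance_for('bob')   # => -25
KeyedCacheable::STORE.keys
# => ["ledger/7/balance_for/alice", "ledger/7/balance_for/bob"]
```

Without the custom `key_format`, both calls would share the default key `ledger/7/balance_for` and the second call would return the first member’s balance, which is why the default leaves arguments out and lets the caller decide.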

We noticed benefits as soon as we began writing the code. The balance-calculating methods were DRYing back up, and now so was the caching logic. In addition, the code was getting more and more maintainable. It became easier to read, test, and modify as the disparate responsibilities were pulled apart and isolated. With the caching logic encapsulated, it also became trivial to add caching to additional methods and to peer review these changes, as often only a single line of code was required.

Increasing your developers’ productivity and happiness is no small victory, but how does it stack up in production? I’m happy to say that simply untangling the caching code from business logic dropped our error rate by an order of magnitude. Instead of a handful of inconsistent cache hits and necessary recalculations a day, we now saw the same number or fewer in a month. Clarity has its benefits.

We didn’t know it beforehand, but we learned we had stumbled onto aspect-oriented programming (AOP). I am no expert in AOP, but this foray showed it to be a useful tool for reducing the cost of code maintenance. It allowed us to separate the code, and thus the responsibilities, into aspects (behaviors such as caching and calculating balances) and to compose new methods from modules. These aspects can be tested more thoroughly and reliably when they are independent of each other. More reliable code saves us maintenance work by avoiding it entirely. In addition, independent aspects are easier to understand and take less time to maintain and extend, with less risk of regression.

We make use of many wonderful open source projects every day and strongly believe in giving back to the community. We’ve found this abstraction to be easy to use and love how it has increased our code’s reliability. It’s ready for out-of-the-box use with Rails and we’d love for you to try it out and let us know what you think. The GitHub repository has more information in addition to implementation instructions and examples.
